10 HR predictions for AI in 2026: What CHROs told us

12 CHROs share what's reshaping HR in 2026: AI fluency, coaching, hiring fraud, and the end of cautious piloting.

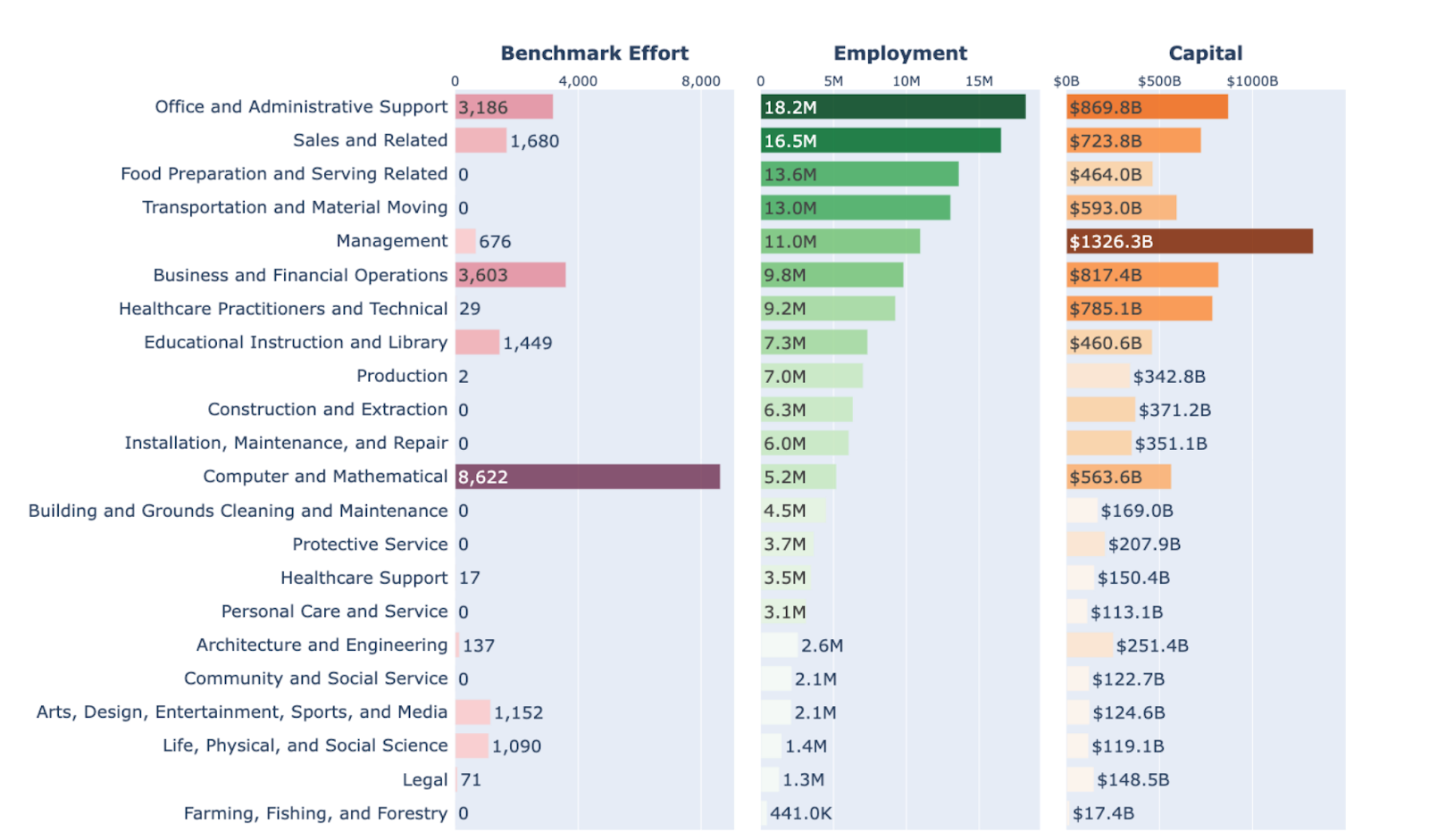

Researchers at Carnegie Mellon and Stanford recently mapped 43 AI agent benchmarks and 72,342 tasks against the U.S. occupational taxonomy. Their finding is worth sitting with: the AI most organizations are deploying right now was built to solve problems that represent a fraction of where real work happens.

The researchers used O*NET, the U.S. government's database covering all 1,016 real-world occupations, as their reference point. They measured which domains AI agents are being built and evaluated against, then compared that against where employment and wages are actually concentrated.

The mismatch is significant. AI development clusters almost entirely in computer and mathematical work, primarily programming and technical tasks. That domain represents just 7.6% of U.S. employment. Meanwhile, the work that runs most organizations barely registers.

Management covers 11 million workers and over $1.3 trillion in economic value. It's 88% digital. It accounts for 1.4% of AI benchmarking effort. Legal work is 70% digitized and is nearly absent from how AI gets built and tested. Office and administrative support employs 18.2 million Americans, and it's largely invisible in the research that shapes AI development.

The researchers describe this pattern as a preference for "methodological convenience" over economic impact. It's easier to build an AI that writes code or retrieves a document than one that handles the ambiguous objectives and long-horizon dependencies of management. So that's what got built.

What's worth noticing here is where the gap sharpens. The researchers tracked not just work domains but the underlying skills those domains require.

AI development clusters tightly around two activities: "Getting Information" and "Working with Computers." Together, those cover less than 5% of U.S. employment. The skill category with the least investment is "Interacting with Others," which the researchers describe as "practically pervading a wide range of real-world occupations."

Coaching. Coordinating. Developing people. Advising. Resolving conflict. These skills run through almost every high-value job in the economy, and they're exactly where current generic AI tools fall short.

The data makes the picture concrete:

For CHROs, this is a direct signal. The skills AI struggles with most are the skills that define effective management and people leadership. AI performs well when tasks are bounded and clearly specified. The layered, judgment-heavy, relational work that most leadership roles depend on sits largely outside what these tools were designed to handle.

If we step back for a moment, the domains most underserved by AI development also carry the greatest concentration of economic value. That's not an accident. It reflects where the hard problems live, and where most vendors haven't gone yet.

The reason this gap persists is structural. Management work involves ambiguous objectives, long-horizon dependencies, and relational judgment that shifts with context. Those aren't limitations that more compute solves. They require AI built specifically for that environment. At Pinnacle, that structural gap is exactly what shaped how we built Pascal. Pascal focuses on the work that's hardest to automate and most consequential to get right: helping managers think through decisions, surface blind spots, and develop the judgment their teams depend on.

The research points to three principles for where AI investment should head next. First, broader domain coverage beyond technical tasks. Second, realistic evaluation against complex and ambiguous scenarios. Third, purpose-built tools for human interaction rather than information retrieval.

The organizations that gain the most from AI over the next few years will be the ones that point AI at the work that actually drives organizational performance: the quality of management conversations, the development of people over time, the calibration of decisions that carry real stakes. These are areas where better performance compounds, and where the current generation of general-purpose AI tools is weakest.

The AI most commonly deployed today was shaped by what's easy to test in a research lab. The AI that creates lasting advantage is shaped by what matters most inside an organization. Those are usually very different things.
